The actual usage is out of scope of this post.

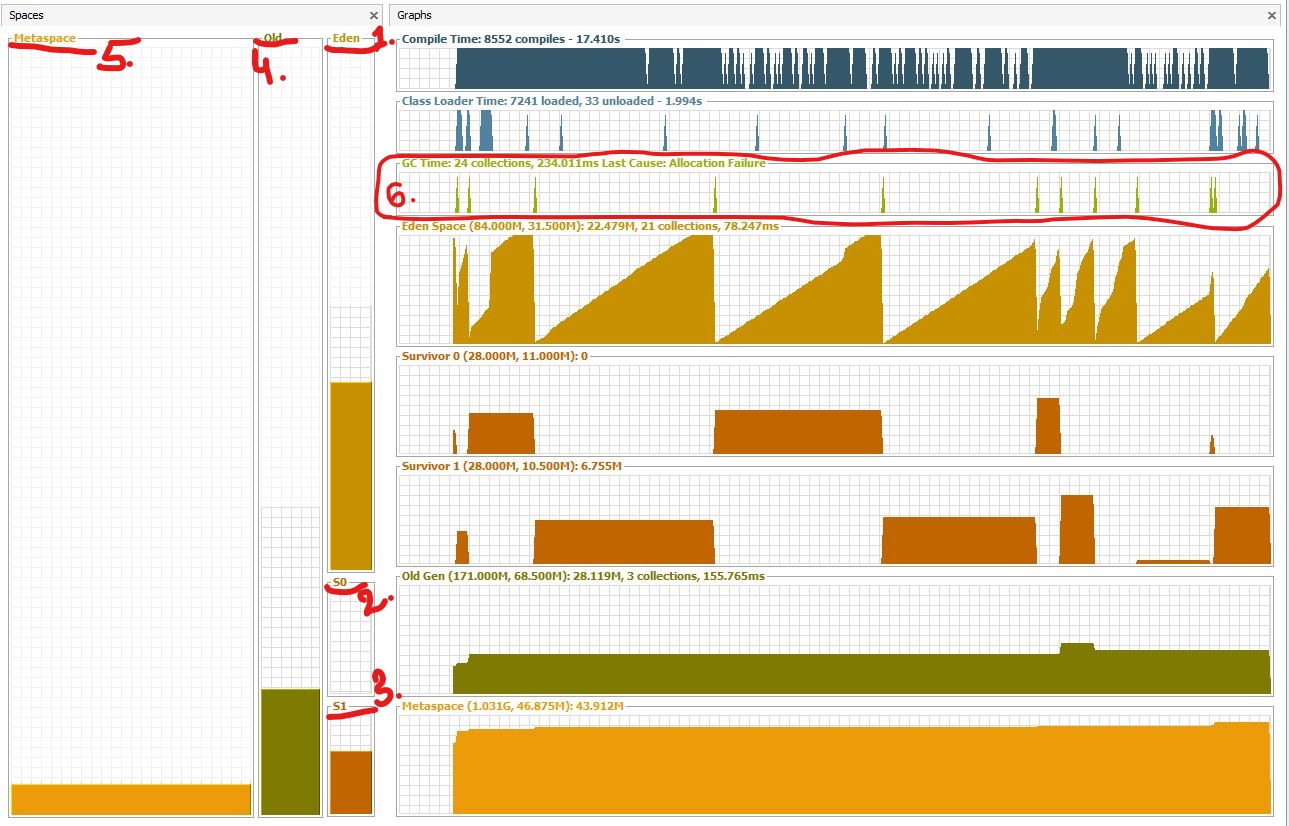

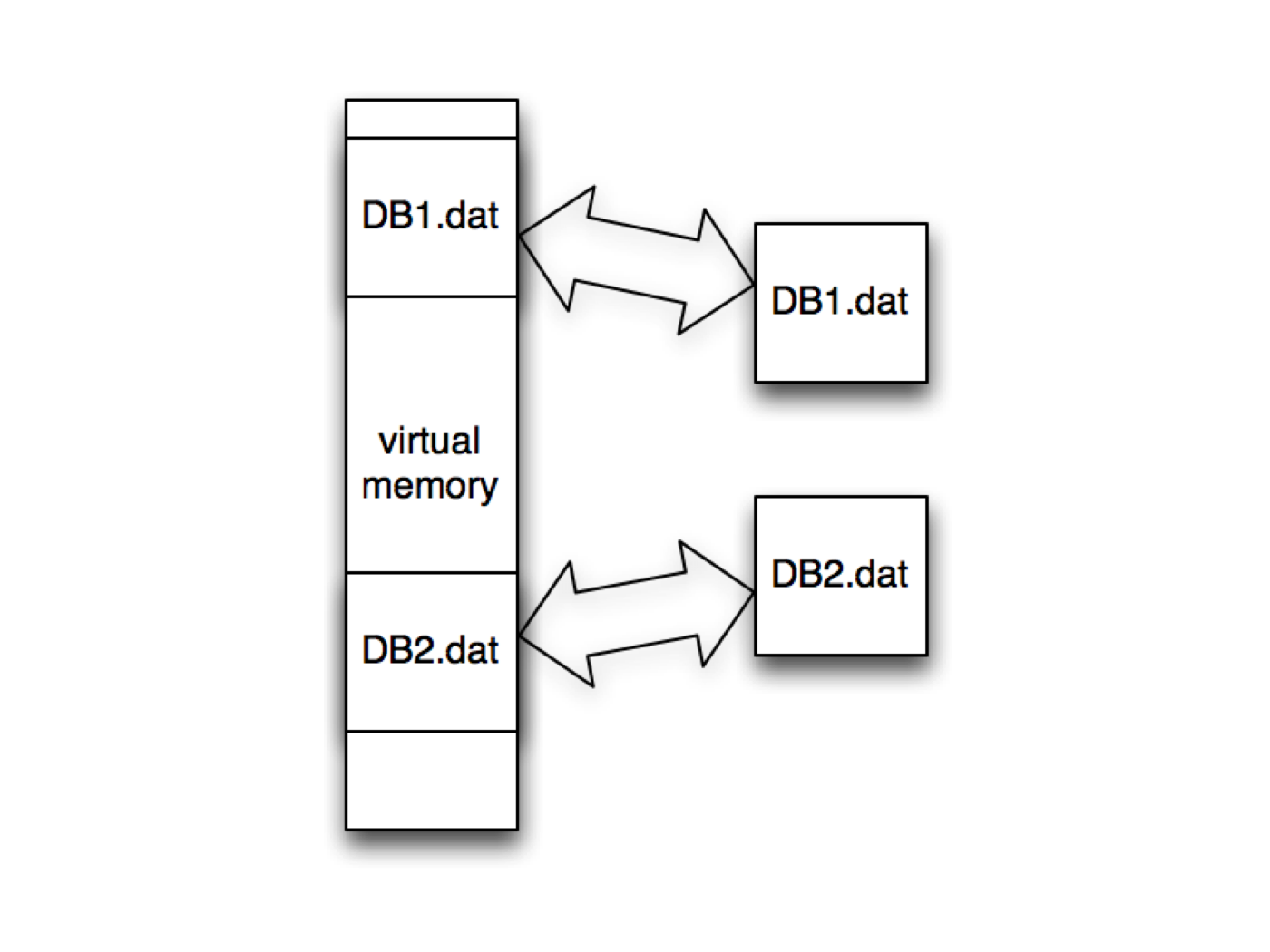

Since the OS maps a chunk of the file into memory, you get all the benefits of OS virtual memory management: hot content can be cached intelligently, and all data is page-aligned, so no buffer copying is needed to write data back. However, nothing comes for free. While mmap does avoid that extra copy, it doesn't guarantee the code will always be faster. Depending on the OS implementation, there may be quite a bit of setup and teardown overhead (the kernel needs to find the address space, maintain it in the TLB, and flush it after unmapping), and page faults get much more expensive since the kernel now needs to read from hardware (like disk) to update the memory space and TLB. Hence, if performance is this critical, benchmarking is always needed, as abusing mmap() may yield worse performance than simply doing the copy. The corresponding class in Java is MappedByteBuffer from the NIO package. It's effectively a variation of a direct byte buffer: its content is a memory-mapped region of a file rather than plain off-heap memory.
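As a rough illustration of MappedByteBuffer, here is a minimal sketch that maps a file read-only and scans it without copying it into a heap array first. The class name `MmapDemo` and the helper `countByte` are made up for this example, and it assumes the file fits in a single mapping (under 2 GB). Whether this beats a plain buffered read depends on the OS and the access pattern, so treat it as something to benchmark rather than a recommendation.

```java
import java.io.IOException;
import java.nio.MappedByteBuffer;
import java.nio.channels.FileChannel;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;

// Hypothetical demo class; not part of any library.
final class MmapDemo {

    /** Maps the whole file read-only and counts how often {@code needle} occurs. */
    static long countByte(Path file, byte needle) throws IOException {
        try (FileChannel channel = FileChannel.open(file, StandardOpenOption.READ)) {
            long size = channel.size();
            if (size == 0) {
                return 0; // nothing to map
            }
            // Assumption: size <= Integer.MAX_VALUE, the limit for one mapping.
            MappedByteBuffer buffer = channel.map(FileChannel.MapMode.READ_ONLY, 0, size);
            long count = 0;
            // Reads go through the mapping; pages are faulted in on demand.
            while (buffer.hasRemaining()) {
                if (buffer.get() == needle) {
                    count++;
                }
            }
            return count;
        }
    }

    public static void main(String[] args) throws IOException {
        Path file = Files.createTempFile("mmap-demo", ".txt");
        file.toFile().deleteOnExit();
        Files.write(file, "mmap,is,not,free".getBytes(StandardCharsets.US_ASCII));
        System.out.println("commas: " + countByte(file, (byte) ','));
    }
}
```

Note that the mapping stays valid after the channel is closed and is only released when the buffer is garbage collected, which is part of the teardown cost mentioned above.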

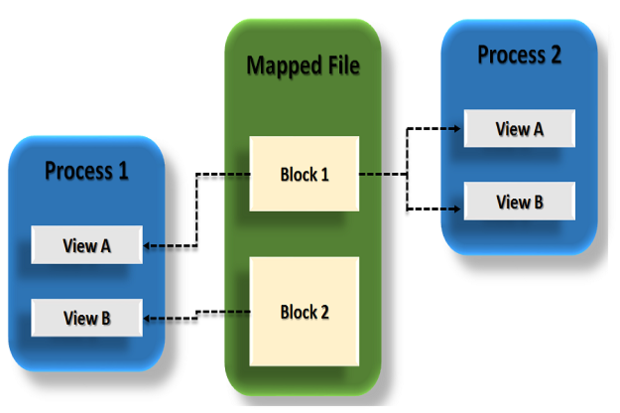

The problem with the above zero-copy approach is that, because no user mode is actually involved, code cannot do anything other than pipe the stream. However, there's a more expensive yet more useful approach: mmap, short for memory-map. Mmap allows code to map a file to kernel memory and access it directly as if it were in the application's user space, thus avoiding the unnecessary copy. As a tradeoff, that will still involve 4 context switches.

This would be fine if latency and throughput aren't your service's concern or bottleneck, but it would be annoying if you do care, say for a static asset server. There are 4 context switches and 2 unnecessary copies for the above example.

OS-level zero copy to the rescue

Clearly in this use case, the copy from/to user space memory is totally unnecessary because we didn't do anything other than dump data to a different socket. Zero copy can thus be used here to save the 2 extra copies. The actual implementation doesn't really have a standard, and it is up to the OS how to achieve that. Typically *nix systems will offer sendfile(). Some say some operating systems have broken versions of it, one of them being OSX (link). Honestly, with such a low-level feature, I wouldn't trust Apple's BSD-like system, so I never tested there. With that, the diagram would be like this:

You may say the OS still has to make a copy of the data in kernel memory space. Yes, but from the OS's perspective this is already zero-copy because no data is copied from kernel space to user space. The reason the kernel needs to make a copy is that general hardware DMA access expects consecutive memory space (and hence the buffer). However, this is avoidable if the hardware supports scatter-gather:

A lot of web servers do support zero-copy, such as Tomcat and Apache. For example, Apache's related doc can be found here, but by default it's off.

Note: Java's NIO offers this through transferTo (doc).
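To make the transferTo route concrete, here is a minimal sketch of sending a file through `FileChannel.transferTo`. The class name `ZeroCopyDemo` and the helper `sendFile` are made up for this example, and the target is a second file channel only to keep the demo self-contained; in a real server it would more likely be a `SocketChannel`. Whether the JVM actually uses sendfile() or a similar kernel path underneath depends on the OS and the channel types, so this shows the API shape rather than a guaranteed zero-copy transfer.

```java
import java.io.IOException;
import java.nio.channels.FileChannel;
import java.nio.channels.WritableByteChannel;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;

// Hypothetical demo class; not part of any library.
final class ZeroCopyDemo {

    /** Sends the whole file to {@code target} and returns the number of bytes sent. */
    static long sendFile(Path source, WritableByteChannel target) throws IOException {
        try (FileChannel in = FileChannel.open(source, StandardOpenOption.READ)) {
            long size = in.size();
            long position = 0;
            // transferTo may move fewer bytes than requested, so loop until done.
            while (position < size) {
                long sent = in.transferTo(position, size - position, target);
                if (sent <= 0) {
                    // Assumption: blocking target. A non-blocking socket would
                    // need to wait for writability instead of giving up here.
                    break;
                }
                position += sent;
            }
            return position;
        }
    }

    public static void main(String[] args) throws IOException {
        Path src = Files.createTempFile("zero-copy-src", ".bin");
        Path dst = Files.createTempFile("zero-copy-dst", ".bin");
        try {
            Files.write(src, "hello zero copy".getBytes(StandardCharsets.UTF_8));
            try (FileChannel out = FileChannel.open(dst, StandardOpenOption.WRITE)) {
                System.out.println("bytes transferred: " + sendFile(src, out));
            }
        } finally {
            Files.deleteIfExists(src);
            Files.deleteIfExists(dst);
        }
    }
}
```

The point of the design is that the bytes never pass through a user-space `byte[]`: the code only tells the kernel which range to move and where, which is also why it cannot inspect or transform the data on the way.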
